I have a stats question on linear regression.

While working with my spreadsheet, I was looking for a predictive relationship between several "independent" (X) variables (for ES) and one "dependent" (Y) variable.

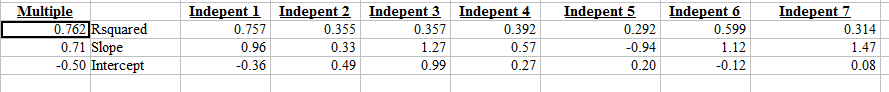

A simple regression between X1 and Y gave R-squared=0.757.

A multiple regression (X1, X2, X3, X4, X5, X6, X7 to Y) gave R-squared=0.762.

1. Does this mean that the information gain from X2..X7 was almost nothing (and X1 is giving the bulk of the information)?

2. Should I be applying any of the functions below, and if yes, what help would they give?
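For question 1, one rough way to judge whether the jump from 0.757 to 0.762 means anything is to compare adjusted R-squared values and compute a partial F statistic for the six added variables. The sketch below uses only the two R-squared values quoted above. The number of rows `n = 100` is a made-up placeholder, since the post does not give the sample size, and the function names are my own.

```python
def adjusted_r2(r2, n, p):
    """Adjusted R-squared for a model with p predictors fitted on n rows."""
    return 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)


def partial_f(r2_reduced, r2_full, n, p_reduced, p_full):
    """F statistic for testing whether the extra predictors add anything."""
    q = p_full - p_reduced                      # number of added predictors
    numerator = (r2_full - r2_reduced) / q
    denominator = (1.0 - r2_full) / (n - p_full - 1)
    return numerator / denominator


n = 100  # ASSUMPTION: hypothetical sample size, not taken from the post

adj_simple = adjusted_r2(0.757, n, 1)   # X1 only
adj_full = adjusted_r2(0.762, n, 7)     # X1..X7
f_stat = partial_f(0.757, 0.762, n, 1, 7)

print("adjusted R2, X1 only:", round(adj_simple, 4))
print("adjusted R2, X1..X7: ", round(adj_full, 4))
print("partial F for X2..X7:", round(f_stat, 3))
```

Plain R-squared can never go down when variables are added, so a small rise on its own says little. If the adjusted value falls, or the partial F is small for the actual sample size, that would be consistent with X2..X7 adding little beyond X1. It would not rule out that some of them are simply correlated with X1.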

------------------------------------

@CORREL calculates the correlation coefficient of values in range1 and range2.

@COV calculates the covariance of the values in two ranges.

@RSQ calculates the square of the Pearson product moment correlation coefficient.

@FISHER calculates the Fisher transformation of a value.

@FISHERINV calculates the inverse of the Fisher transformation.
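To make the descriptions above concrete, here is a minimal Python sketch of what these five functions compute. The data values are invented for illustration. Whether the spreadsheet's @COV divides by n or by n - 1 is not stated above, so dividing by n here is an assumption.

```python
import math


def mean(values):
    return sum(values) / len(values)


def cov(x, y):
    # ASSUMPTION: population covariance (divide by n); the spreadsheet may differ
    mx, my = mean(x), mean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / len(x)


def correl(x, y):
    # Pearson correlation: covariance scaled by both standard deviations
    return cov(x, y) / math.sqrt(cov(x, x) * cov(y, y))


def rsq(x, y):
    # Square of the Pearson correlation
    return correl(x, y) ** 2


def fisher(r):
    # Fisher transformation: 0.5 * ln((1 + r) / (1 - r)), i.e. atanh(r)
    return math.atanh(r)


def fisherinv(z):
    # Inverse Fisher transformation, i.e. tanh(z)
    return math.tanh(z)


# Invented example data
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

r = correl(x, y)
print("correl:", r)
print("cov:   ", cov(x, y))
print("rsq:   ", rsq(x, y))
print("fisher:", fisher(r), "-> back:", fisherinv(fisher(r)))
```

For a simple regression with an intercept, R-squared equals the squared Pearson correlation, so @RSQ applied to X1 and Y should reproduce the 0.757 from the simple regression. The Fisher transformation is mainly used to build confidence intervals or tests for a correlation coefficient.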

Notes:

Correlation and covariance both measure the relationship between two sets of data. However, the correlation statistic is independent of the unit of measure, while the covariance statistic is dependent on the unit of measure.
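The unit-of-measure point can be checked with a small Python sketch. The numbers are invented: the same lengths are expressed once in metres and once in centimetres, and only the covariance changes. The helper names are my own, and population covariance (divide by n) is assumed.

```python
import math


def cov(x, y):
    # Population covariance (ASSUMPTION: divide by n)
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / len(x)


def correl(x, y):
    return cov(x, y) / math.sqrt(cov(x, x) * cov(y, y))


# Invented data: lengths in metres and some response y
x_m = [1.2, 1.5, 1.7, 2.0, 2.4]
y = [10.0, 13.0, 14.0, 18.0, 21.0]

# The same lengths expressed in centimetres
x_cm = [100.0 * v for v in x_m]

print("cov (metres):     ", cov(x_m, y))
print("cov (centimetres):", cov(x_cm, y))      # 100 times larger
print("correl (metres):     ", correl(x_m, y))
print("correl (centimetres):", correl(x_cm, y))  # unchanged
```

Rescaling X multiplies the covariance by the same factor, while the correlation stays the same because the scale factor cancels against the standard deviation of X.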